Markov Streets

This week, I will solve 538’s riddler express using two methods: math, and simulation study.

Riddler Express

In Riddler City, the city streets follow a grid layout, running north-south and east-west. You’re driving north when you decide to play a little game. Every time you reach an intersection, you randomly turn left or right, each with a 50 percent chance.

After driving through 10 intersections, what is the probability that you are still driving north?

This problem reminds me of when I used to walk home from work in downtown Chicago before Covid-19. I’d somewhat randomly turn left and right with the traffic lights to get home as fast as I could. This problem is a bit different so lets first visualize the problem by simulating one experiment.

Code to generate gif

In this experiment, the driver started facing North but ended up facing South after driving through 10 intersections. With the computational approach, we would need to simulate the experiment many times and count the number of times the driver ended up facing North. While the computational method is conceptually straightforward, I find that the mathematical approach is actually easier to solve once the theory is understood.

Mathematical – Graph Theory and Markov Chains

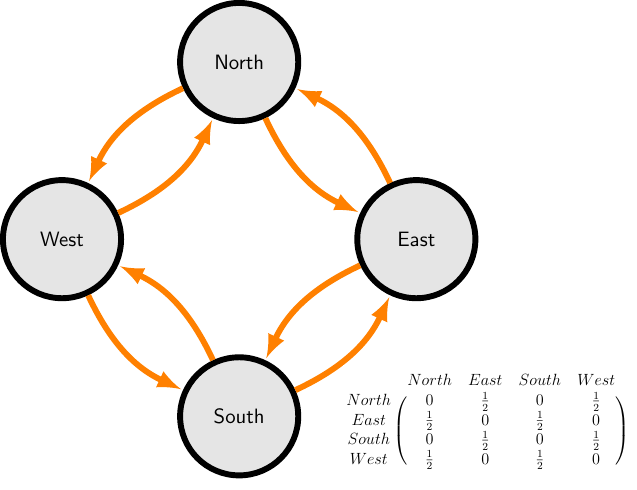

We can mathematize the description with a network graph to answer the question at hand. Specifically, the network graph represents the current direction of the driver as a node and represents which direction the driver can turn to given the current direction of the driver as an edge pointing from one node towards another node. That relationship is presented visually below with either a graph or with a transition matrix (bottom right).

graph – nodes are the current direction of the driver; edges are what direction the driver can turn to.

transition matrix – row is the current direction of the driver; column is direction the driver can turn to.

LaTeX code for graph

A concrete example to read the graph is when the driver is facing north (on the North node), they can either turn West or East (there is an edge pointing from the North node to West node and East node).

There is one more property we need to invoke before we can answer the question. That property is that the probability to where the driver will turn relies only on where the driver is currently facing. In statistical language, this is called the Markov Property and this allows us to simply raise the transition matrix \(n\) times. Hence, if \(A\) is the transition matrix with one step then \(A^n\) is the transition matrix after \(n\) steps. More concretely with our example:

$A^n = $ the probability of the driver will ending up facing direction \(x\) given the driver started with direction \(y\) after driving through \(n\) intersections.

It’s worth noting that we are doing matrix multiplication as oppose to element by element multiplication. In python, A^n is element by element multiplication while np.linalg.matrix_power(A, 10) is matrix multiplication.

We can calculate \(A^n\) in python as:

import numpy as np

# transition matrix

A = np.array([[0, 0.5 , 0, 0.5],

[0.5 , 0, 0.5, 0],

[0, 0.5 , 0, 0.5],

[0.5 , 0, 0.5, 0]])

# A^n

np.linalg.matrix_power(A, 10)## array([[0.5, 0. , 0.5, 0. ],

## [0. , 0.5, 0. , 0.5],

## [0.5, 0. , 0.5, 0. ],

## [0. , 0.5, 0. , 0.5]])So reading the the transition matrix, we want the North row and North column which is the top left item.

Therefore, the answer is \(0.5\).

Extra Credit

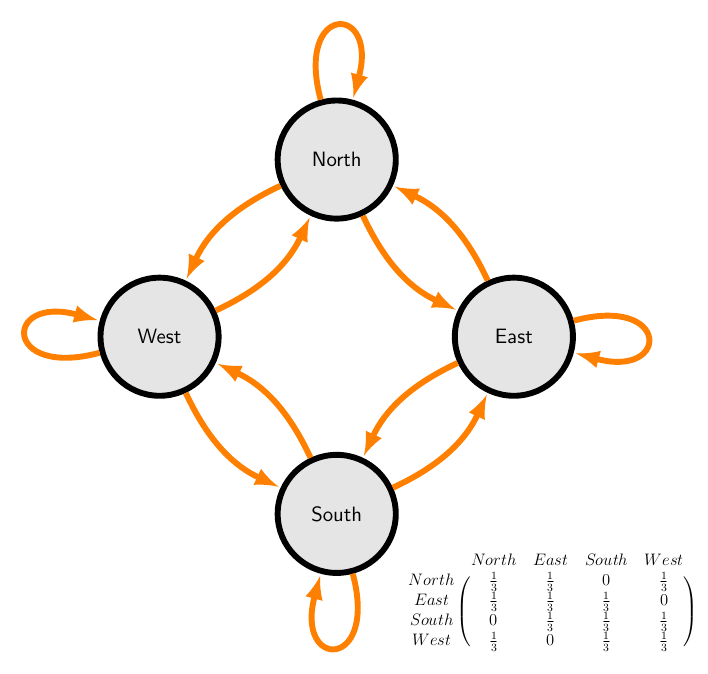

With the theory we have set up, finding the answer to the extra credit problem is straightforward as well. Just plug in the new transition matrix that incorporates the new conditions.

Extra credit: Now suppose that at every intersection, there’s a one-third chance you turn left, a one-third chance you turn right and a one-third chance you drive straight. After driving through 10 intersections, now what’s the probability that you are still driving north?

This example is almost identical to the previous example with the extra twist that now you can drive straight through. Note that if you drive straight through, then you continue to face the direction you were currently facing. In Graph Theory terms, this means that given any state, \(i\), we can travel to that state, \(i\), again. Graphically, that is shown with a loop connecting a node to itself.

import numpy as np

# transition matrix

B = np.array([[1/3, 1/3 , 0, 1/3],

[1/3 , 1/3, 1/3, 0],

[0, 1/3 , 1/3, 1/3],

[1/3 , 0, 1/3, 1/3]])

# B^n

np.linalg.matrix_power(B, 10)## array([[0.2500127 , 0.24999577, 0.24999577, 0.24999577],

## [0.24999577, 0.2500127 , 0.24999577, 0.24999577],

## [0.24999577, 0.24999577, 0.2500127 , 0.24999577],

## [0.24999577, 0.24999577, 0.24999577, 0.2500127 ]])Again, we have set up the transition matrix such that the top-left position is the probability of facing North after 10 steps given the driver started facing North.

That answer is exactly 0.25, \(0.2500127 = \frac{4,921}{19,683}\).

Simulation Study – with python

Let’s say fancy math isn’t your thing, and you want to actually conduct simulate the experiments. In that case, we take similar ideas of state and flow to design our program.

Since we care about the state of the driver, we can create a class… While it might be easy to think we need a class, we can actually answer the specific question with just a couple of functions. We can simply store the current direction of the driver as a variable within the function and just return it at the end. While we lose access to the direction of the driver during the experiment, the question at hand only cares about the end result. This allows us to write very succinct and readable python code.

import random

def experiment(rounds : int) -> str:

direction = "North"

for _ in range(rounds):

if direction in ["North", "South"]:

direction = random.choice(["West", "East"])

else:

direction = random.choice(["North", "South"])

return directionWe need to run the experiment many times to be confident that our results are meaningful and not just due to randomly noise. The main function runs 1000 experiments and then counts the ratio of those experiments to get a probability for each outcome.

from typing import Callable

def main(experiment : Callable, rounds : int, trials : int) -> dict:

experiments = [experiment(rounds) for _ in range(trials)]

return dict(zip(experiments,

[experiments.count(i)/trials for i in experiments]))

main(experiment, rounds=10, trials=1000)## {'North': 0.479, 'South': 0.521}The answer is pretty close to the answer from before, \(0.5\).

Extra credit

Because we modularized the code to separate experiment() and main(), we can define a new function experiment_exta() to replace the previous experiment() and drop it straight into main. Functions are first class so we can store functions as a variable.

import random

def experiment_extra(rounds : int) -> str:

direction = "North"

for _ in range(rounds):

if direction == "North":

direction = random.choice(["North", "West", "East"])

elif direction == "East":

direction = random.choice(["North", "South", "East"])

elif direction == "South":

direction = random.choice(["South", "West", "East"])

else:

direction = random.choice(["North", "South", "West"])

return direction

main(experiment_extra, rounds=10, trials=1000)## {'North': 0.228, 'East': 0.263, 'South': 0.228, 'West': 0.281}The answer is close to the mathematical answer, \(0.2500127\).